Always Failing widget in custom dashboards

Monitor tests in your test suites that always fail.

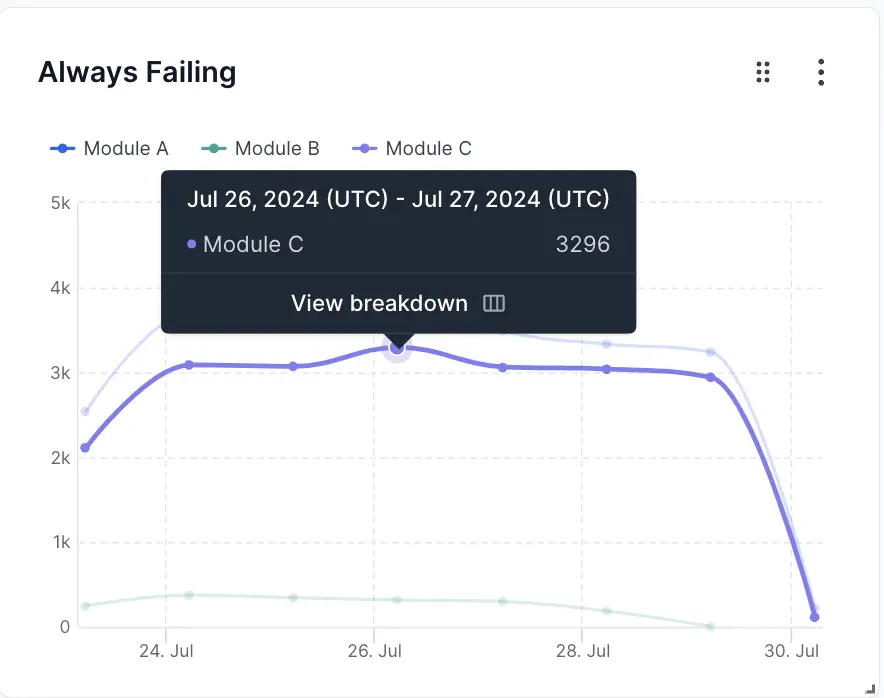

This widget illustrates the trend of the number of tests that continuously fail over time in your test suite. Tests are classified as Always Failing based on the conditions set in the Always Failing Smart Tag. An increasing number of Always Failing tests indicates that the test cases in your automation suite are becoming less effective.

The Always Failing widget is a collection of one or more line charts in which the X-axis represents time and the Y-axis represents the number of Always Failing tests. Each line is a different segment that you can configure to compare different projects, builds, users, etc.

In the sample above, there are two segments: Always Failing A (yellow line) and Always Failing B (blue line). The value of Always Failing A increases from 1217 on 11th January to 3488 on 14th January. Similarly, the value of Always Failing B increases from 441 to 948. This spike in the number of Always Failing tests on 14th January warrants a deeper audit. You can also see that both lines have other spikes around 21st January and 27th January. The insights from such an analysis could help reduce the number of Always Failing tests in your test suites in the future.
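To put the January 14th spike in relative terms, the change from 11th to 14th January for each segment (values taken from the sample chart) works out as follows:

```latex
\begin{aligned}
\Delta_{\text{rel}} &= \frac{v_{\text{14 Jan}} - v_{\text{11 Jan}}}{v_{\text{11 Jan}}} \\
\Delta_{\text{rel}}(A) &= \frac{3488 - 1217}{1217} = \frac{2271}{1217} \approx 1.866 \approx 186.6\% \\
\Delta_{\text{rel}}(B) &= \frac{948 - 441}{441} = \frac{507}{441} \approx 1.150 \approx 115.0\%
\end{aligned}
```

Both segments more than doubled within three days, which is why this spike stands out from the rest of the trend.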

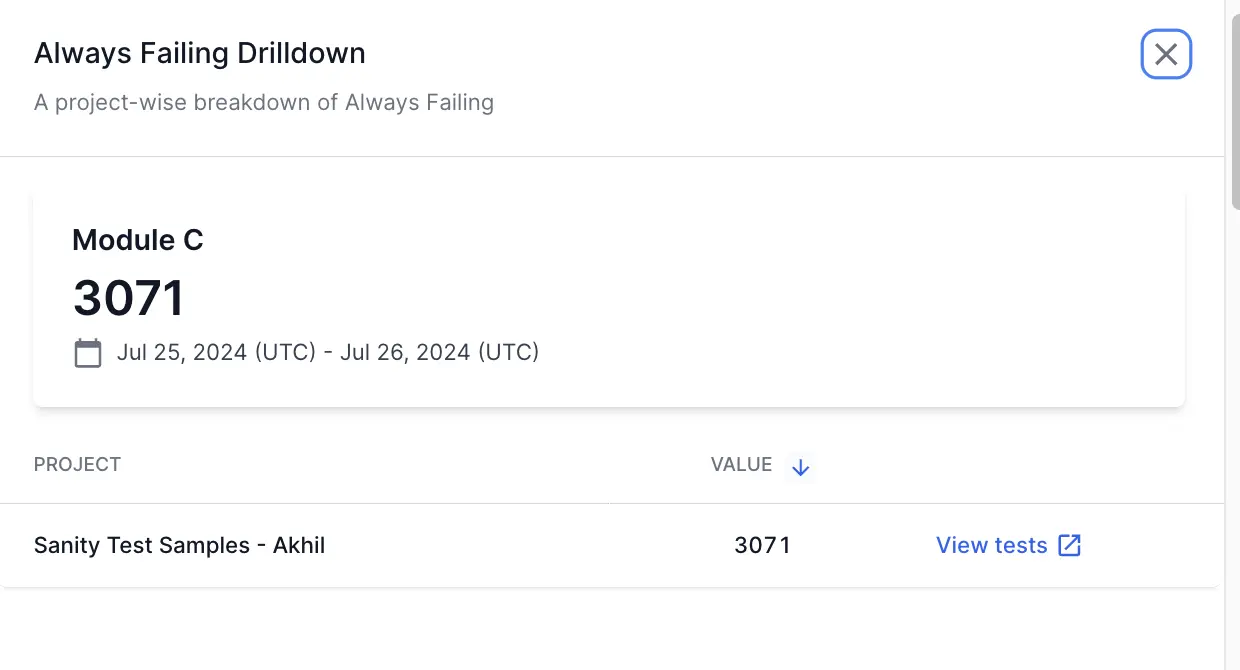

Drill down for more information

Test Observability enables you to investigate more contextual information on all dashboard widgets using the drill-down feature.

You can use the drill-down feature in the Always Failing widget to analyze the reasons for test failures in more detail. For example, if you see a spike in the number of tests that always fail, you can investigate why the spike occurred.

Follow these steps to use the drill-down feature:

- Hover over any point in the Always Failing widget and click View breakdown. A project-wise breakdown of the metrics for the selected date range opens in a side pane.

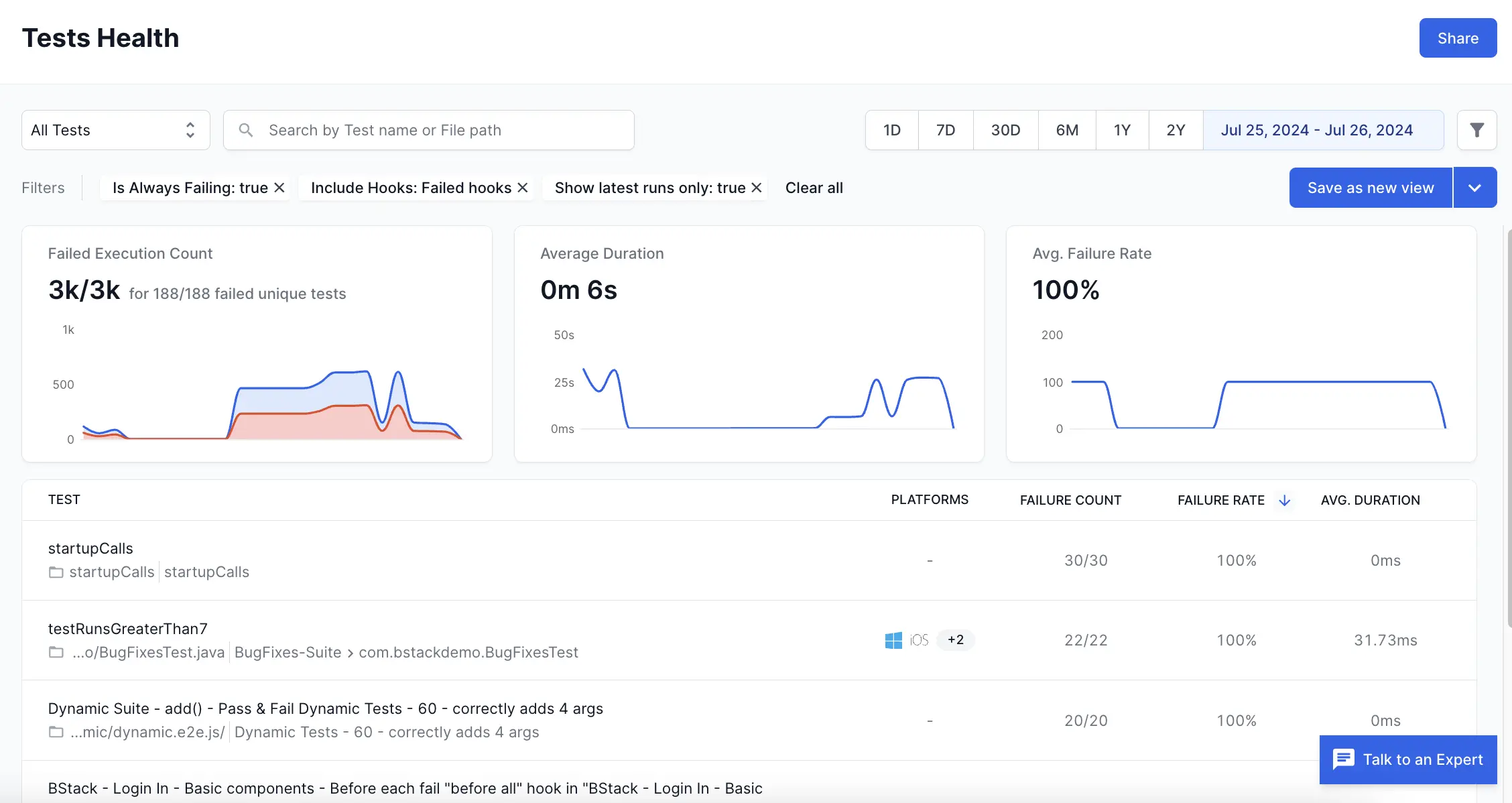

- Click View tests to see the tests that contribute to the Always Failing count.

This opens Tests Health in a new tab with the applicable filters. On Tests Health, you can view the individual tests that always fail to further investigate what caused the test failures.

Widget configuration - Always Failing

You can configure the following options in the Always Failing widget:

- Widget name: A suitable name to easily identify the purpose of the widget.

- Description: An optional widget description that explains the purpose of the widget in detail. Users can view this description by hovering over the info icon on the widget.

- Chart Summary: A toggle to show or hide the chart summary, a concise banner that displays summarized information on your Always Failing widget. You can choose Unique Tests Impacted or Average as the chart summary; by default, the widget displays Unique Tests Impacted. The chart summary is available only on widgets with a single segment.

- Number/Percentage: Choose the unit of measurement on the Y-axis: either the total number of always failing test runs or the percentage of always failing tests against total test runs. By default, the widget shows the absolute number of always failing test runs. If your daily number of test runs varies, switch to Percentage so that changes in test volume do not distort the trend.

- Segments: Add up to five segments in the Always Failing widget using the Add segment option. These segments appear as separate line charts in the widget. Use segments together with filters: apply different filters in each segment to compare different projects, builds, users, etc.

- Filter: Add a filter to include only the data you want in a particular segment. You can filter data by Projects, Unique Build Names, Users, Build Tags, Test Tags, Hooks Visibility, Host Names, Folder names, Device, OS, and Browser.

You can also import filters from other widgets to avoid duplicate efforts.
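The difference between the Number and Percentage options can be illustrated with a small Python sketch. It assumes you already have, for each day, the count of always failing test runs and the total number of test runs in a segment; the `DailyCounts` class and `y_value` function are hypothetical names used only for illustration and are not part of Test Observability.

```python
from dataclasses import dataclass


@dataclass
class DailyCounts:
    # Hypothetical per-day totals for one segment; field names are illustrative only.
    day: str
    always_failing_runs: int
    total_runs: int


def y_value(counts: DailyCounts, mode: str) -> float:
    """Return the Y-axis value for one day in 'number' or 'percentage' mode."""
    if mode == "number":
        return float(counts.always_failing_runs)
    if mode == "percentage":
        if counts.total_runs == 0:
            return 0.0  # assumption: a day with no runs is plotted as 0%
        return 100.0 * counts.always_failing_runs / counts.total_runs
    raise ValueError(f"unknown mode: {mode}")


days = [
    DailyCounts("day 1", always_failing_runs=50, total_runs=500),
    DailyCounts("day 2", always_failing_runs=100, total_runs=1000),
]

for d in days:
    # prints "day 1 50.0 10.0" and then "day 2 100.0 10.0"
    print(d.day, y_value(d, "number"), y_value(d, "percentage"))
```

In this sample, doubling the test volume doubles the Number value while the Percentage value stays at 10%, which is why Percentage is the better choice when your daily number of test runs varies.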

Sample use cases

You can use the Always Failing widget to track and compare the tests that always fail across several aspects of your testing organization. Here are a few sample use cases to get you started:

Analyze tests that always fail in different modules or teams

You can configure separate segments for different modules or teams in your test suites. You can use segments in combination with the following filters to identify modules and teams:

- Unique build names filter to identify build names that belong to a particular module or team.

- Users filter to differentiate between team members who triggered the build.

- Folder names filter to identify modules based on folders in your test repository.

- Build Tags and Test Tags filters to identify tags that represent team or module information.

Consider an example in which the Always Failing tests in three modules are compared.

Here, the three line charts represent Module A (purple line), Module B (blue line), and Module C (yellow line) in a test suite. Such a graph quickly shows that Module A has the highest number of Always Failing tests. Module C closely follows Module A but tends to have slightly fewer. Module B has the fewest Always Failing tests. Using this insight, you can focus on Modules A and C and use the drill-down feature to find out why their number of Always Failing tests stays high. In many cases, you can apply the best practices followed by top-performing teams or modules to reduce the number of Always Failing tests in other teams or modules.

To create this widget, configure a different Folder names filter on each of the three segments that define Module A, Module B, and Module C.
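The module comparison rests on each segment counting only the Always Failing tests that match its own Folder names filter. The minimal Python sketch below models that idea; the `TestRun` and `Segment` classes, the folder paths, and the sample runs are hypothetical, and it assumes each run has already been classified by the Always Failing Smart Tag. Test Observability applies the filters for you in the widget configuration, so this only illustrates how the same data splits into separate lines.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TestRun:
    # Hypothetical run record; field names are illustrative only.
    test_name: str
    folder: str
    always_failing: bool  # assumed to be set by the Always Failing Smart Tag


@dataclass
class Segment:
    # One line on the chart, defined by a Folder names filter.
    name: str
    folder_names: set[str] = field(default_factory=set)

    def matches(self, run: TestRun) -> bool:
        # A run belongs to this segment only if its folder is listed in the filter.
        return run.folder in self.folder_names


def always_failing_tests(segment: Segment, runs: list[TestRun]) -> int:
    """Count distinct Always Failing tests that pass the segment's filter."""
    return len({r.test_name for r in runs if r.always_failing and segment.matches(r)})


segments = [
    Segment("Module A", {"tests/module_a"}),
    Segment("Module B", {"tests/module_b"}),
    Segment("Module C", {"tests/module_c"}),
]

runs = [
    TestRun("login", "tests/module_a", True),
    TestRun("logout", "tests/module_a", True),
    TestRun("login", "tests/module_a", True),  # repeat run of the same test
    TestRun("search", "tests/module_b", False),
    TestRun("checkout", "tests/module_c", True),
]

for s in segments:
    # prints "Module A 2", "Module B 0", "Module C 1"
    print(s.name, always_failing_tests(s, runs))
```

Counting distinct test names (rather than runs) keeps a test that fails repeatedly from being counted more than once in a segment; this is a modeling choice for the sketch.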

Analyze tests that always fail on different platforms

You can measure the tests that always fail across multiple devices, OS, and browser combinations using the Always Failing widget. This can be achieved by configuring separate segments for each of the OS-device-browser combinations that you want to track.
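Because each OS-device-browser combination needs its own segment and the widget allows up to five segments, the number of combinations can exceed the limit quickly. The following Python sketch enumerates OS-browser combinations with `itertools.product` and checks them against that limit. The dictionary keys mirror the OS and Browser filter names, but the `platform_segments` function and the OS and browser values are hypothetical, and Device is left out to keep the example short.

```python
from itertools import product

# The Always Failing widget accepts up to five segments.
MAX_SEGMENTS = 5


def platform_segments(oses, browsers):
    """Build one hypothetical segment definition per OS-browser combination."""
    segments = [
        {"name": f"{os_name} / {browser}", "OS": os_name, "Browser": browser}
        for os_name, browser in product(oses, browsers)
    ]
    if len(segments) > MAX_SEGMENTS:
        raise ValueError(
            f"{len(segments)} combinations exceed the {MAX_SEGMENTS}-segment limit"
        )
    return segments


# One OS and three browsers: three segments, within the limit.
for seg in platform_segments(["Windows"], ["Browser A", "Browser B", "Browser C"]):
    print(seg["name"])

# Two OSes and three browsers would need six segments, which is over the limit.
try:
    platform_segments(["Windows", "macOS"], ["Browser A", "Browser B", "Browser C"])
except ValueError as err:
    print(err)
```

Checking the count up front makes it clear which combinations fit in a single widget before you start adding segments.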

Consider an example in which the Always Failing tests run on three different browsers are compared.

Here, the three line charts represent the trend of Always Failing tests run on Browser A (purple line), Browser B (yellow line), and Browser C (blue line). This graph shows that Browser A has more Always Failing tests than the other browsers. Browser C has the fewest, and Browser B falls in between. You can analyze further using the drill-down feature. Using these insights, you can concentrate on reducing the number of Always Failing tests on Browser A first.
